That viral video you just shared? It might never have happened.

Midjourney, Sora, and dozens of other AI generators now produce images so realistic that it is genuinely hard to tell what is real from what is fake. We are no longer talking about obvious glitches but about synthetic content that fools expert eyes.

The bad news: MIT researchers found that people fail to recognize AI-created images more than 4 out of every 10 times. That is close to a coin flip. Scammers, propagandists, and other bad actors know it. Fake images now fuel everything from romance scams to actual cyberwarfare.

This guide cuts through the noise. You’ll learn how to actually detect AI-generated images and videos using practical observation techniques, the detection tools worth your time in 2026, step-by-step verification workflows, and honest talk about what these methods can and can’t catch.

What Are AI-Generated Images and Videos?

How AI Creates Fake Visuals

Type a sentence. Get a photorealistic image. That’s how easy it’s become.

Tools like Midjourney and Sora don’t need cameras or actors. They generate faces, places, and events entirely from code. Everything looks authentic because these systems studied millions of real-world examples before creating their own.

Two years ago, you’d spot a fake instantly. Today, even professionals get fooled.

Why AI Content Is a Growing Problem

Fake spreads fast. Truth crawls behind it.

Social platforms don’t verify what gets posted. Users don’t either. One synthetic image can rack up thousands of shares before anyone thinks to question it. By then, the lie has done its job.

How often do fakes slip through? MIT says humans miss AI-generated images over 40% of the time. That’s a huge gap, and bad actors know exactly how to exploit it.

Scams, misinformation, reputation attacks, cyber warfare. All powered by content that never happened.

Real Risks You Should Not Ignore

The risks of not knowing how to detect AI-generated images and videos are serious. For example:

- Fake ads can trick users into scams

- Identity manipulation can lead to fraud

- Misinformation can influence public opinion

Businesses and governments face these risks too. AI content can distort decisions and erode trust. This reflects broader global trends in technology and power (US vs China Tech War).

Why You Can’t Afford to Ignore This

Think detection skills are just for journalists and fact-checkers? Think again.

Consider what’s already happening: investors lose money on fake “leaked” product images, parents panic over fabricated school emergency videos, businesses face PR nightmares from synthetic “evidence” of misconduct, and everyday people get blackmailed with AI-generated compromising content.

None of these victims expected to be targeted. All of them wished they’d spotted the fake sooner.

AI-generated content will only get more convincing. Learning detection now means you’re building immunity before the next wave hits, not scrambling to catch up after you’ve already been fooled.

How to Detect AI-Generated Videos: Tools & Verification

Fake videos used to be easy. Bad lip-sync, robotic movements, weird lighting. Not anymore.

Modern deepfakes pass casual inspection without breaking a sweat. Catching them means combining visual analysis with detection tools and source checks. Let’s break it down.

1. Look for Visual Clues

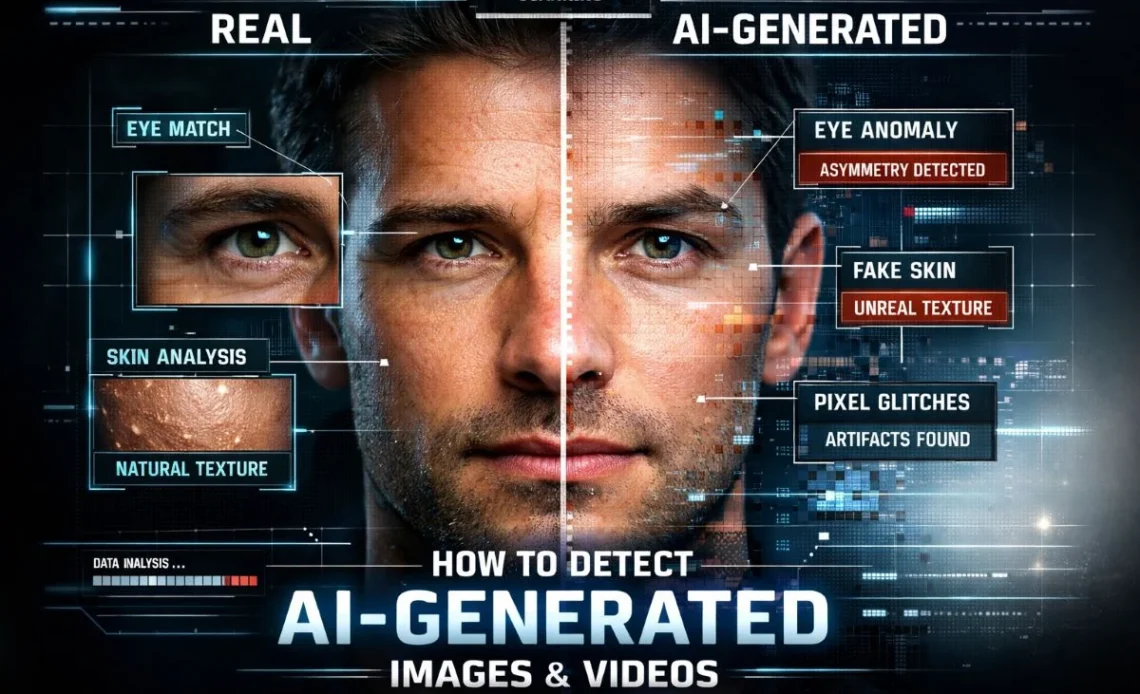

Even advanced AI can leave subtle errors in videos. Watch for:

- Facial irregularities – misaligned eyes, inconsistent expressions, or distorted features

- Body movements – robotic gestures or unnatural posture

- Background inconsistencies – warped objects, changing shadows, or unusual reflections

- Blinking patterns – irregular or missing eye blinks

By carefully observing these clues, you can often spot AI-generated content before you even reach for detection tools.

2. Verify Audio and Lip Sync

AI videos often struggle with audio alignment. Listen and watch for:

- Lip-sync mismatches – speech does not match mouth movements

- Artificial voices – robotic tone or unnatural cadence

- Echo or strange sound transitions – common in synthesized speech

Audio tools such as Adobe Premiere Pro or Audacity can help you inspect the audio track for these inconsistencies. Careful observation combined with tools increases your chances of detecting fake content.

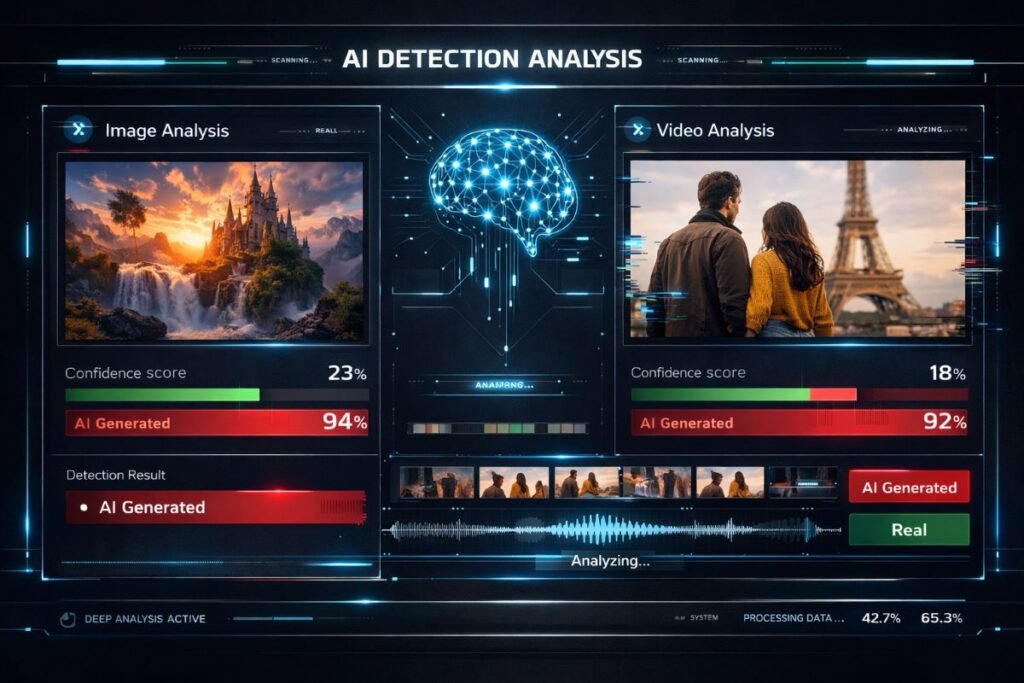

3. Use AI Detection Tools

Specialized AI detection tools make identifying fake videos much easier. Some reliable options in 2026 include:

- Hive AI Detector – analyzes both images and videos generated by AI

- Deepware Scanner – focused on deepfake detection

- Sensity AI – checks facial movements and video authenticity

Using these tools together improves accuracy. According to MIT research, human observation alone fails in over 40% of cases, which is why detection tools are worth adding to your checks.

Enterprise adoption of AI is also rising rapidly, which makes these tools even more critical (Top 5 AI Agent Tools for Enterprise Automation).

4. Check What’s Hidden in the File

Most people ignore file metadata. Big mistake.

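Right-click any image, open properties, and look for camera info, GPS coordinates, and timestamps. Real photos have this stuff. AI-generated ones? Usually blank or filled with nonsense. Keep in mind that many social platforms strip metadata on upload, so a blank file is a reason to dig further, not proof on its own.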

Watch for mismatched dates too. A “breaking news” photo created three weeks ago? Dead giveaway.
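The date check can be automated once you have the metadata in hand. Here is a minimal sketch, assuming the fields have already been pulled out of the file (for example with a tool like ExifTool) into a plain dictionary. The function name `metadata_red_flags` and the two-day tolerance are hypothetical choices for illustration; `Make`, `Model`, and `DateTimeOriginal` are standard EXIF tag names.

```python
from datetime import datetime, timedelta

# EXIF stores timestamps as "YYYY:MM:DD HH:MM:SS".
EXIF_DATE_FORMAT = "%Y:%m:%d %H:%M:%S"


def metadata_red_flags(metadata, claimed_time, tolerance=timedelta(days=2)):
    """Return a list of warning strings for one file's metadata.

    `metadata` is a dict of already-extracted EXIF fields (an assumption:
    this sketch does not read the file itself). `claimed_time` is when the
    photo supposedly was taken. The tolerance is an illustrative default.
    """
    flags = []

    # Camera info: real photos usually record the device that took them.
    if not (metadata.get("Make") or metadata.get("Model")):
        flags.append("no camera make or model")

    # Timestamp: missing, unreadable, or far from the claimed event.
    raw_date = metadata.get("DateTimeOriginal")
    if not raw_date:
        flags.append("no capture timestamp")
        return flags
    try:
        captured = datetime.strptime(raw_date, EXIF_DATE_FORMAT)
    except ValueError:
        flags.append("unreadable capture timestamp")
        return flags
    if abs(captured - claimed_time) > tolerance:
        flags.append("capture time far from claimed event time")

    return flags


if __name__ == "__main__":
    # A "breaking news" photo whose file says it was shot three weeks earlier.
    sample = {"Make": "Canon", "Model": "EOS R6",
              "DateTimeOriginal": "2026:01:01 10:00:00"}
    print(metadata_red_flags(sample, datetime(2026, 1, 22, 10, 0, 0)))
```

An empty list doesn't prove a file is real, and a flag doesn't prove it's fake. Treat the output as a prompt to look closer.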

Also trace the source. If someone claims a video came from an official account, go check that account. Not there? Fake.

For example, viral videos may circulate widely on social media, but if they are missing from credible platforms, treat them with caution (Iranian Women’s Soccer Team Asylum Crisis).

5. Cross-Check Across Multiple Sources

Validating content using multiple sources reduces the risk of being misled. You can:

- Check news coverage on reputable platforms

- Compare the video with real events or images

- Use reverse image search on key frames of the video

Cross-referencing keeps you from relying solely on content that may have been manipulated.
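To run a reverse image search on key frames, you first need still images from the video. Here is a rough sketch of one way to get them, assuming you have ffmpeg installed: pick a few evenly spaced timestamps and build one ffmpeg command per frame. The helper names `key_frame_timestamps` and `ffmpeg_frame_command` and the file names are made up for illustration, and the script only prints the commands rather than running them.

```python
def key_frame_timestamps(duration_s, count):
    """Return `count` evenly spaced timestamps (seconds) across the video.

    Each timestamp sits at the midpoint of its slice, so the very first and
    very last instants (often black frames or logos) are skipped.
    """
    if duration_s <= 0 or count < 1:
        raise ValueError("duration_s must be positive and count at least 1")
    return [duration_s * (i + 0.5) / count for i in range(count)]


def ffmpeg_frame_command(video_path, timestamp_s, out_path):
    """Build an ffmpeg argument list that saves one frame as an image.

    `-ss` before `-i` seeks to the timestamp; `-frames:v 1` writes a
    single video frame to `out_path`.
    """
    return ["ffmpeg", "-ss", f"{timestamp_s:.2f}", "-i", video_path,
            "-frames:v", "1", out_path]


if __name__ == "__main__":
    # Hypothetical 10-second clip, four frames to upload to a search engine.
    for i, t in enumerate(key_frame_timestamps(10.0, 4)):
        print(" ".join(ffmpeg_frame_command("clip.mp4", t, f"frame_{i}.png")))
```

Evenly spaced sampling is the simplest choice, not the smartest one. If a clip has one scene that matters, grab extra frames from that scene by hand.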

6. Why One Method Isn’t Enough

Every detection tool has blind spots. Every manual check has limits. That’s just reality.

Smart verification means layering techniques: eyeball the visuals, check file metadata, use detection software, and trace the source. One method might fail. All four together? Much harder to fool.
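A bit of back-of-the-envelope arithmetic shows why layering helps. In the sketch below, the 0.4 human miss rate comes from the MIT figure cited earlier; the 0.5 miss rates for the other three checks are invented placeholders, not measurements. It also assumes the checks fail independently of each other, which is a strong assumption that real fakes won't always honor.

```python
from math import prod


def combined_miss_rate(miss_rates):
    """Chance that every check misses the same fake.

    Assumes the checks fail independently, so their miss rates multiply.
    Each rate must be a probability between 0 and 1.
    """
    for rate in miss_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"miss rate out of range: {rate}")
    return prod(miss_rates)


if __name__ == "__main__":
    # 0.4 = human eye (MIT figure from the article).
    # The three 0.5 values are hypothetical stand-ins for metadata checks,
    # detection software, and source tracing.
    layers = [0.4, 0.5, 0.5, 0.5]
    print(f"one check alone misses: {layers[0]:.0%}")
    print(f"all four miss together: {combined_miss_rate(layers):.0%}")
```

Under these made-up numbers, a fake that beats your eyes 40% of the time beats all four layers only about 5% of the time. The exact figure matters less than the direction: every independent check you add shrinks the gap.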

Where Does This Leave Us?

Detection skills have become digital self-defense.

The tools exist. The techniques work. But they require something most people skip: actually using them consistently. Train yourself to pause, investigate, and verify before reacting.

AI content creation isn’t slowing down. Your ability to see through it shouldn’t either.

Advice From Digital Forensics Pros

Question everything that looks too perfect or too outrageous.

Real life is messy. Real photos have flaws. When something looks impossibly polished or hits emotional buttons too precisely, dig deeper.

Make verification a habit, not extra work. Spotted something fishy? Reverse image search takes five seconds. Checking metadata takes ten. Bellingcat’s verification guide walks through these exact steps if you want a deeper breakdown.

Follow credible fact-checkers. Groups like AFP, Reuters, and Bellingcat publish findings openly. Use their expertise.

And when you’re unsure? Don’t share. Sitting on questionable content costs you nothing. Spreading a fake costs your credibility.

Expert Advice Worth Following

Perfection should make you suspicious.

Real cameras capture real flaws. Grain, blur, awkward shadows: that’s normal. An image without any of that? Probably fake.

The FBI, NSA, and CISA released a joint advisory on this exact problem. Their takeaway: one detection method isn’t enough. Stack them.

Still can’t tell if it’s real? Don’t share. Better safe than embarrassed.